If you’ve ever built an AI agent…

You’ve probably seen this 👇

🤖 It retries endlessly

🤖 Calls the same failing API again and again

🤖 “Thinks”… but goes nowhere

And worst of all?

👉 It never knows when to stop

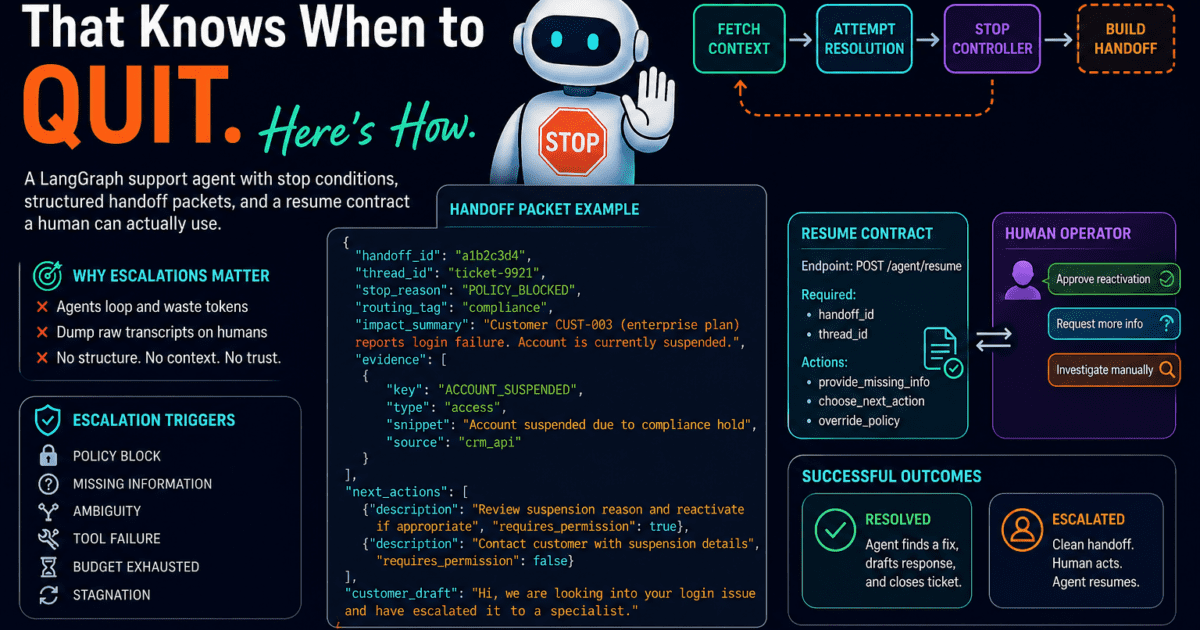

💥 The Hidden Problem Nobody Talks About

Most AI agents don’t fail because they’re dumb.

They fail because they don’t know when they’re stuck.

Instead of saying:

“I need help”

They:

- Loop forever 🔁

- Burn tokens 💸

- Confuse humans 🤯

- Dump logs instead of insights

That’s when I realized something powerful:

👉 Escalation is not failure. It’s architecture.

🚨 The Breakthrough: Teach Agents to Quit (Properly)

So I built something different.

Not just a smart agent…

But a disciplined agent that knows:

✔ When it’s blocked

✔ When it lacks permissions

✔ When data is missing

✔ When it’s wasting time

And most importantly:

👉 When to stop and hand over cleanly

🧠 The Shift: From “Trying Harder” → “Deciding Smarter”

Most systems do this:

Run → Fail → Retry → Retry → Retry → Panic

Mine does this:

Run → Analyze → Decide

↓

If blocked → STOP → ESCALATE → HANDOFF

That one decision layer changes everything.

🧩 What a Good AI Agent Does When It’s Stuck

Instead of chaos…

It produces a structured handoff packet:

- What happened

- Why it stopped

- What it tried

- What’s needed next

- Suggested actions

- Customer-safe response

👉 Humans get clarity. Not noise.
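One way to give every handoff the same shape is a typed record with one field per item in the list above. This is a minimal sketch using a stdlib dataclass. `HandoffPacket`, its field names, and the sample values are illustrative assumptions, not a fixed schema.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class HandoffPacket:
    what_happened: str                      # short summary of the situation
    why_stopped: str                        # stop reason, e.g. "POLICY_BLOCKED"
    what_was_tried: list[str] = field(default_factory=list)
    needed_next: str = ""                   # missing decision, permission, or data
    suggested_actions: list[str] = field(default_factory=list)
    customer_response: str = ""             # safe to send to the customer as-is

    def missing_fields(self) -> list[str]:
        # Names of empty fields, so an incomplete packet is caught before a human sees it
        return [name for name, value in asdict(self).items() if not value]


packet = HandoffPacket(
    what_happened="Customer cannot log in",
    why_stopped="POLICY_BLOCKED",
    what_was_tried=["Fetched account status"],
    needed_next="Decision on account reinstatement",
    suggested_actions=["Review suspension reason"],
    customer_response="We're reviewing your account and will follow up shortly.",
)
print(packet.missing_fields())  # []
```

`missing_fields()` gives a cheap completeness check before the packet goes to a human.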

⚖️ Approvals vs Escalations (Critical Distinction)

Most teams get this wrong.

✅ Approval

Agent knows what to do → needs permission

“Should I execute this?”

🚨 Escalation

Agent doesn’t know what to do safely

“You need to take over”

Mix these up and you either:

- Spam humans 🤦‍♂️

- Or create unsafe automation 😬
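The distinction can be encoded as a small routing function that asks the two questions in order. This is a sketch built on assumptions. The `Action` fields (`plan_known`, `needs_permission`) and the three route names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"              # safe to execute without a human
    APPROVAL = "approval"      # plan is known; a human only says yes or no
    ESCALATION = "escalation"  # no safe plan; a human must take over


@dataclass
class Action:
    plan_known: bool        # does the agent know what to do?
    needs_permission: bool  # would executing the plan require sign-off?


def route(action: Action) -> Route:
    # First question: is there a safe plan at all?
    if not action.plan_known:
        return Route.ESCALATION
    # Second question: does executing the plan need a human's permission?
    if action.needs_permission:
        return Route.APPROVAL
    return Route.AUTO
```

The plan check runs first, so an agent that doesn't know what to do never disguises that as an approval request. Approval is reserved for plans a human can simply accept or reject.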

🏗️ The Architecture (LangGraph Flow)

Fetch Context → Attempt Resolution → Stop Controller → Handoff Builder

Each piece has a strict responsibility.
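This dependency-free stand-in shows how the four stages pass state to each other. It is not LangGraph's API. `run_flow` and the toy nodes are hypothetical. Each node returns a partial update that is merged into shared state with a plain dict merge (last write wins); LangGraph would additionally apply reducers such as `merge_evidence`. After each round the stop controller either loops back to resolution or routes to the handoff.

```python
from typing import Callable

Node = Callable[[dict], dict]


def run_flow(state: dict, fetch: Node, attempt: Node, stop: Node, handoff: Node) -> dict:
    # Each node returns a partial update that is merged into the shared state
    state = {**state, **fetch(state)}
    while True:
        state = {**state, **attempt(state)}
        state = {**state, **stop(state)}
        if state.get("stop_reason"):
            # Conditional edge: any stop reason routes to the handoff builder
            return {**state, **handoff(state)}
        # No stop reason yet: loop back to attempt resolution


# Toy nodes (hypothetical): no evidence is ever found, so only the budget ends the loop
final = run_flow(
    {"step_count": 0, "max_steps": 3},
    fetch=lambda s: {"evidence_keys": []},
    attempt=lambda s: {"step_count": s["step_count"] + 1},
    stop=lambda s: {"stop_reason": "BUDGET_EXCEEDED"} if s["step_count"] >= s["max_steps"] else {},
    handoff=lambda s: {"handoff": {"stop_reason": s["stop_reason"]}},
)
print(final["step_count"], final["handoff"])  # 3 {'stop_reason': 'BUDGET_EXCEEDED'}
```

The `while True` loop ends only because the stop controller eventually sets `stop_reason`. Guaranteeing that is the controller's whole job.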

🧠 1. Agent State (The System Contract)

This is the backbone of everything.

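```python
from typing import Annotated, TypedDict


def merge_evidence(existing: list[dict], new: list[dict]) -> list[dict]:
    # Reducer: merge evidence by "key" so a re-fetched fact replaces
    # the older entry instead of being duplicated
    evidence_by_key = {e["key"]: e for e in existing}
    for e in new:
        evidence_by_key[e["key"]] = e
    return list(evidence_by_key.values())


class AgentState(TypedDict):
    ticket: dict
    category: str
    account: dict | None
    # LangGraph combines node updates to this field with merge_evidence
    evidence: Annotated[list[dict], merge_evidence]
    evidence_keys: list[str]
    step_count: int
    max_steps: int
    stagnation_count: int
    last_evidence_count: int
    outcome: str
    stop_reason: str | None
    # Declare every key that nodes or update_state write:
    # the human's input on resume and the escalation packet
    resume_input: dict | None
    handoff: dict | None
```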

💡 Why this matters:

- Enables traceable reasoning

- Enables stagnation detection

- Keeps state lightweight + serializable
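The `merge_evidence` reducer is what keeps re-fetched evidence from piling up. Entries are keyed by `key`, and a newer entry with the same key replaces the older one while keeping its position. Here is a quick standalone check; the function is copied from the state definition above, and the sample evidence entries are made up.

```python
def merge_evidence(existing: list[dict], new: list[dict]) -> list[dict]:
    # Index existing evidence by key; newer entries overwrite older ones
    evidence_by_key = {e["key"]: e for e in existing}
    for e in new:
        evidence_by_key[e["key"]] = e
    return list(evidence_by_key.values())


old = [{"key": "ACCOUNT_STATUS", "value": "active"}]
new = [
    {"key": "ACCOUNT_STATUS", "value": "suspended"},  # fresher fetch of the same fact
    {"key": "FAILED_LOGINS", "value": 4},             # genuinely new evidence
]
merged = merge_evidence(old, new)
print(merged)
# [{'key': 'ACCOUNT_STATUS', 'value': 'suspended'}, {'key': 'FAILED_LOGINS', 'value': 4}]
```

The number of distinct keys grows only when genuinely new evidence arrives. That makes it a cheap progress signal, and the stop controller relies on exactly that kind of count.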

🔍 2. Attempt Resolution (Decision Engine)

This is where your agent decides:

👉 “Can I solve this — or should I stop?”

```python
def attempt_resolution(state: AgentState) -> dict:
    # An earlier node already decided to stop: change nothing
    if state.get("stop_reason"):
        return {}

    keys = state.get("evidence_keys", [])
    step = state.get("step_count", 0) + 1

    # Policy blocks: the agent must not act on its own
    if "ACCOUNT_SUSPENDED" in keys:
        return {"stop_reason": "POLICY_BLOCKED", "step_count": step}

    if "INVOICE_OVERDUE" in keys:
        return {"stop_reason": "POLICY_BLOCKED", "step_count": step}

    # Simplified fallback: no rule matched, so ask a human for the missing data
    return {"stop_reason": "MISSING_INFO", "step_count": step}
```

👉 This isn’t throwaway test logic.

👉 It’s decision architecture: explicit rules that map evidence to a stop reason.
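As the rule list grows, the `if` chain can become a lookup table. This sketch of that refactor assumes the same evidence keys and stop reasons as above. `BLOCKING_RULES` and `decide_stop_reason` are hypothetical names, and table order sets priority when several keys are present.

```python
# Hypothetical rule table: evidence key -> stop reason, checked in order
BLOCKING_RULES: list[tuple[str, str]] = [
    ("ACCOUNT_SUSPENDED", "POLICY_BLOCKED"),
    ("INVOICE_OVERDUE", "POLICY_BLOCKED"),
]


def decide_stop_reason(evidence_keys: list[str]) -> str:
    present = set(evidence_keys)
    for key, reason in BLOCKING_RULES:
        if key in present:
            return reason
    # Same fallback as the if-chain version: nothing matched, ask for more data
    return "MISSING_INFO"


def attempt_resolution(state: dict) -> dict:
    if state.get("stop_reason"):
        return {}
    return {
        "stop_reason": decide_stop_reason(state.get("evidence_keys", [])),
        "step_count": state.get("step_count", 0) + 1,
    }
```

New rules become data. A table is easier to review and unit-test than a growing chain of branches.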

🚦 3. Stop Controller (The Real Brain)

Most AI systems fail here.

This is what prevents infinite loops.

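```python
def stop_controller(state: AgentState) -> dict:
    # Never overwrite a more specific reason set upstream (e.g. POLICY_BLOCKED)
    if state.get("stop_reason"):
        return {}

    # Hard budget: stop once the step limit is reached
    step = state.get("step_count", 0)
    if step >= state.get("max_steps", 15):
        return {"stop_reason": "BUDGET_EXCEEDED"}

    # Stagnation: count consecutive rounds that added no new evidence keys
    curr_key_count = len(state.get("evidence_keys", []))
    last_key_count = state.get("last_evidence_count", 0)
    stag = state.get("stagnation_count", 0)
    stag = stag + 1 if curr_key_count == last_key_count else 0

    if stag >= 3:
        return {"stop_reason": "STAGNATION", "stagnation_count": stag}

    return {"stagnation_count": stag, "last_evidence_count": curr_key_count}
```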

💡 This enables:

- Loop detection

- Budget control

- Intelligent stopping

🚨 Escalation Triggers (Real-World Logic)

Your agent must recognize failure modes:

- 🔒 Policy Block

- ❓ Missing Information

- 🤯 Ambiguity

- 🔧 Tool Failure

- ⏳ Budget Exhausted

- 🔁 Stagnation

👉 This is what separates demos from production systems.
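Naming these failure modes explicitly keeps them out of free text and lets each one route to the right team. This sketch assumes an `Enum`. `POLICY_BLOCKED`, `MISSING_INFO`, `BUDGET_EXCEEDED`, and `STAGNATION` are the strings used in the code above. `AMBIGUOUS`, `TOOL_FAILURE`, and every routing tag are hypothetical placeholders.

```python
from enum import Enum


class StopReason(str, Enum):
    POLICY_BLOCKED = "POLICY_BLOCKED"    # rules forbid acting
    MISSING_INFO = "MISSING_INFO"        # required data is absent
    AMBIGUOUS = "AMBIGUOUS"              # several plausible readings (hypothetical name)
    TOOL_FAILURE = "TOOL_FAILURE"        # a dependency errored (hypothetical name)
    BUDGET_EXCEEDED = "BUDGET_EXCEEDED"  # step budget used up
    STAGNATION = "STAGNATION"            # no new evidence for several rounds


# Hypothetical routing tags: which queue receives the handoff
ROUTING = {
    StopReason.POLICY_BLOCKED: "compliance",
    StopReason.MISSING_INFO: "support",
    StopReason.AMBIGUOUS: "support",
    StopReason.TOOL_FAILURE: "engineering",
    StopReason.BUDGET_EXCEEDED: "engineering",
    StopReason.STAGNATION: "engineering",
}


def routing_tag(reason: str) -> str:
    # StopReason(...) raises ValueError for unknown strings, so typos fail loudly
    return ROUTING[StopReason(reason)]
```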

📦 4. Handoff Packet (Your Killer Feature 🔥)

When escalation happens:

👉 The agent packages everything cleanly

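```python
import uuid

from langchain_core.runnables import RunnableConfig


def build_handoff(state: AgentState, config: RunnableConfig) -> dict:
    # LangGraph passes the run config as a node's second argument;
    # the thread_id lives there, not in AgentState
    thread_id = config.get("configurable", {}).get("thread_id")

    packet = {
        "handoff_id": str(uuid.uuid4()),
        "thread_id": thread_id,
        "stop_reason": state.get("stop_reason"),
        # Placeholders: derive these from stop_reason and evidence in practice
        "routing_tag": "compliance",
        "impact_summary": "Customer issue detected",
        "evidence": state.get("evidence", []),
        "next_actions": [
            {"description": "Investigate manually", "requires_permission": False}
        ],
        "customer_draft": "Hi, we are looking into your issue.",
    }
    # A node's output must use state keys, so AgentState needs a `handoff` field
    return {"outcome": "escalated", "handoff": packet}
```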

💡 Result:

- No raw logs

- No confusion

- Just actionable insight
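A reviewer usually wants the packet as a short note rather than raw JSON. This is a minimal rendering sketch that assumes a packet dict with the same keys as the handoff packet above. `render_handoff` and the sample values are hypothetical.

```python
def render_handoff(packet: dict) -> str:
    # Summarize evidence as key=value pairs instead of dumping raw records
    evidence = ", ".join(f"{e['key']}={e.get('value')}" for e in packet.get("evidence", []))
    actions = "\n".join(
        f"  - {a['description']}" + (" (needs permission)" if a.get("requires_permission") else "")
        for a in packet.get("next_actions", [])
    )
    return (
        f"[{packet.get('routing_tag', 'unrouted')}] stopped: {packet.get('stop_reason')}\n"
        f"Impact: {packet.get('impact_summary', '')}\n"
        f"Evidence: {evidence or 'none'}\n"
        f"Next actions:\n{actions or '  - none'}\n"
        f"Customer draft: {packet.get('customer_draft', '')}"
    )


packet = {
    "stop_reason": "POLICY_BLOCKED",
    "routing_tag": "compliance",
    "impact_summary": "Customer cannot log in",
    "evidence": [{"key": "ACCOUNT_SUSPENDED", "value": True}],
    "next_actions": [{"description": "Review suspension", "requires_permission": True}],
    "customer_draft": "Hi, we are looking into your issue.",
}
print(render_handoff(packet))
```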

🔄 5. Resume Contract (Where Most Systems Fail)

This is what makes your system next-level.

The agent doesn’t restart blindly.

👉 It resumes with context.

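```python
def resume_from_packet(graph, thread_id: str, human_input: str, as_node: str | None = None):
    # graph: a compiled LangGraph graph with a checkpointer, so thread state persists
    config = {"configurable": {"thread_id": thread_id}}

    # Refuse to resume a thread that has no saved checkpoint
    current_state = graph.get_state(config)
    if not current_state.values:
        raise ValueError(f"No checkpoint found for thread {thread_id!r}")

    # Clear the stop flag and attach the human's answer
    update_data = {
        "stop_reason": None,
        "outcome": "running",
        "resume_input": {"payload": human_input},
    }
    # as_node sets which node the update is attributed to, and so which node runs next
    graph.update_state(config, update_data, as_node=as_node)

    # Passing None as input continues from the checkpoint instead of restarting
    return graph.invoke(None, config)
```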

💡 This enables:

- Human + AI collaboration

- No lost context

- Faster resolutions

⚔️ Real Scenario

Customer:

“I can’t log in”

System finds:

👉 Account is suspended

❌ Typical Agent

- Retries login

- Blames system

- Confuses user

✅ This Agent

- Detects suspension

- Recognizes policy block

- Stops immediately

- Escalates with context

👉 That’s the difference between:

Automation vs Engineering

🔐 Production Reality (What Most Blogs Skip)

You must handle:

🧑‍🤝‍🧑 Concurrency

Multiple humans clicking “resume”

→ Use idempotency keys

⏱️ Stale Data

Ticket updated after escalation

→ Reject outdated actions

🔌 System Failures

APIs down

→ Escalate as a tool failure instead of retrying blindly

👉 This is where real systems break.
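Both guards can sit in front of the resume call. This is a minimal in-memory sketch. It assumes each resume request carries an idempotency key and the ticket version the human saw. `ResumeGuard`, the version numbers, and the in-process set are hypothetical stand-ins for a real datastore.

```python
import threading


class ResumeGuard:
    """Hypothetical guard: accept each resume once, and only against fresh data."""

    def __init__(self) -> None:
        self._seen: set[str] = set()
        self._lock = threading.Lock()

    def check(self, idempotency_key: str, seen_version: int, current_version: int) -> str:
        with self._lock:  # two humans clicking at once cannot both pass
            if idempotency_key in self._seen:
                return "DUPLICATE"  # replayed click: do nothing
            if seen_version != current_version:
                return "STALE"      # ticket changed after escalation: reject
            self._seen.add(idempotency_key)
            return "ACCEPTED"
```

In production, the set would typically become a table with a unique index on the key, so the check survives restarts and works across multiple workers.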

📈 Real Impact

After implementing this:

🔻 Reduced agent loops

💸 Lower token costs

🚀 Faster resolutions

😌 Happier support teams

And most importantly:

👉 Humans get answers — not logs

🧠 The Big Insight

You don’t need an agent that solves everything.

You need an agent that:

✔ Solves what it can

✔ Stops when it should

✔ Explains clearly

✔ Hands off cleanly

🎯 Final Thought

Everyone is trying to build:

🤖 “Smarter agents”

But the real unlock is:

👉 More disciplined agents

Because in real systems…

Knowing when to stop

is more valuable than trying forever.