Software testing is undergoing a fundamental shift.

For years, QA engineers relied on hardcoded assertions to validate API responses. That approach worked well when systems were simple and predictable.

But modern APIs are no longer static.

They are dynamic, context-aware, and often influenced by AI-driven logic.

And that’s exactly why traditional assertions are starting to break down.

The Problem with Hardcoded Assertions

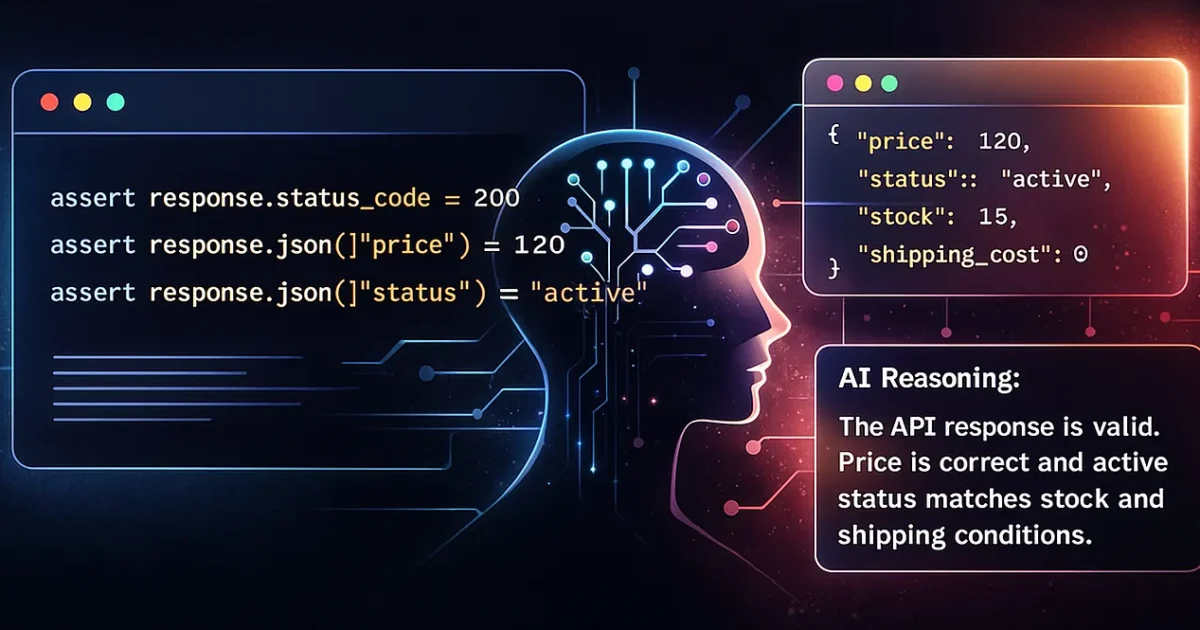

A typical API test still looks like this:

assert response.status_code == 200

assert response.json()["price"] == 120

assert response.json()["status"] == "active"

Clean. Simple. Easy to read.

But also fragile.

Why?

Because these assertions assume:

- Fixed values

- Stable business rules

- Predictable responses

None of which are guaranteed anymore.

Why Static Checks No Longer Work in Modern Systems

1. Business Logic Changes Frequently

Small updates can break hundreds of tests.

Example:

- Pricing logic updated

- New optional fields introduced

- Status transitions modified

Your tests fail not because the API is wrong, but because your expectations are outdated.

2. Lack of Context Awareness

Consider this response:

{ "status": "pending_verification" }

A static assertion expecting "active" will fail.

But in reality:

- The user may be in a valid onboarding flow

- KYC verification may be in progress

The system is correct.

The test is wrong.

3. High Maintenance Overhead

Hardcoded assertions lead to:

- Large test files

- Frequent updates

- Repetitive changes across multiple tests

Every business change = multiple commits and rework.

4. Inability to Detect Behavioral Issues

Static assertions validate values, not logic.

They cannot detect:

- Logical inconsistencies

- Invalid state transitions

- Unexpected side effects

- Semantic violations (e.g., negative pricing)

They check data correctness, not behavior correctness.
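To make that gap concrete, here is a small sketch with a made-up order payload. The field names `status`, `price`, and `delivery_date` are assumptions for illustration, not taken from a real API. Every value-level check passes even though the payload breaks two business rules.

```python
# Hypothetical response payload; field names are illustrative only.
response_body = {
    "status": "delivered",
    "price": -15,           # negative price: semantically invalid
    "delivery_date": None,  # delivered order with no delivery date
}


def static_checks(body):
    """Value-level checks of the kind shown earlier; returns True if all pass."""
    assert body["status"] == "delivered"
    assert isinstance(body["price"], int)
    assert "delivery_date" in body
    return True


# All three assertions pass, yet the payload is wrong in two ways.
static_checks_passed = static_checks(response_body)
print(static_checks_passed)
```

The checks confirm that the fields exist and hold the expected types and values, but nothing ties `status` to `delivery_date` or constrains the sign of `price`.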

Enter AI Reasoning-Based Validation

This is where things change.

Instead of validating fixed values, AI validates intent and behavior.

Using an LLM-powered validator (here a hypothetical `llm` client, not a specific library), your test becomes:

reasoning = llm.reason(
    expected_behavior="""
        Price must always be >= 0.
        If user is premium, shipping_cost = 0.
        Stock cannot be negative.
        Status should match inventory conditions.
    """,
    actual_response=response.json(),
)

assert reasoning.is_valid
print(reasoning.explanation)
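The `llm.reason` call above is not a real library API. As a rough sketch of what could sit behind it, the snippet below builds a prompt from the rules and the response, sends it to a model, and parses a JSON verdict. The names `Reasoning`, `build_prompt`, `reason`, and `fake_complete` are all hypothetical, and `fake_complete` is a stand-in so the example runs without a model; in practice you would pass in a function that calls your LLM provider.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Reasoning:
    is_valid: bool
    explanation: str


def build_prompt(expected_behavior: str, actual_response: dict) -> str:
    """Combine the rules and the response into a single instruction."""
    return (
        "You are validating an API response against business rules.\n"
        f"Rules:\n{expected_behavior.strip()}\n\n"
        f"Response:\n{json.dumps(actual_response, indent=2)}\n\n"
        'Reply with JSON only: {"is_valid": true or false, "explanation": "..."}'
    )


def reason(complete: Callable[[str], str], expected_behavior: str,
           actual_response: dict) -> Reasoning:
    """Ask the model for a verdict; replies that cannot be parsed fail the check."""
    raw = complete(build_prompt(expected_behavior, actual_response))
    try:
        verdict = json.loads(raw)
        return Reasoning(bool(verdict["is_valid"]), str(verdict["explanation"]))
    except (json.JSONDecodeError, KeyError, TypeError):
        return Reasoning(False, f"Could not parse model reply: {raw!r}")


def fake_complete(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    if '"stock": -2' in prompt:
        return json.dumps({
            "is_valid": False,
            "explanation": 'Stock is -2 which violates the rule: "Stock cannot be negative."',
        })
    return json.dumps({"is_valid": True, "explanation": "The API response is valid."})


rules = """
Price must always be >= 0.
Stock cannot be negative.
"""
good = reason(fake_complete, rules, {"price": 120, "stock": 5})
bad = reason(fake_complete, rules, {"price": 120, "stock": -2})
print(good.is_valid, bad.is_valid)
print(bad.explanation)
```

Treating an unparseable reply as a failure is a deliberate choice in this sketch: a test should not pass just because the model answered in an unexpected format.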

What This Looks Like in Practice

When Everything Is Correct

AI Reasoning:

The API response is valid.

User is premium, so shipping_cost = 0 is correct.

Price and stock are within expected ranges.

Status matches inventory rules.

When a Bug Exists

AI Reasoning:

Stock is -2 which violates the rule: "Stock cannot be negative."

All other fields are valid.

What AI Reasoning Adds That Assertions Cannot

Contextual Understanding

AI interprets rules like:

“If order is delivered, delivery_date must exist.”

This goes beyond simple equality checks.

Dynamic Validation

Instead of comparing fixed values, AI:

- Reads the rules

- Reads the response

- Evaluates correctness

Human-Readable Explanations

Instead of:

AssertionError: expected 200 but got 202

You get:

202 Accepted is valid because the operation is asynchronous.

Adaptability to Change

Update the rule once:

Usage limit for premium users = 150

All tests adapt automatically.

No need to rewrite assertions.
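One possible way to get this "update once" behaviour is to render the rule text from a small table of business values that every test shares. This is only a sketch; `RULE_PARAMS`, `RULE_TEMPLATE`, and `render_rules` are hypothetical names.

```python
# Single source of truth for tunable business values (hypothetical names).
RULE_PARAMS = {"premium_usage_limit": 150}

RULE_TEMPLATE = (
    "If user is premium:\n"
    "- Status must be active or trialing\n"
    "- Usage limit is {premium_usage_limit}\n"
)


def render_rules(params: dict) -> str:
    """Fill the rule template with the current business values."""
    return RULE_TEMPLATE.format(**params)


# Every test that validates a premium account passes this same text to the validator.
rules_text = render_rules(RULE_PARAMS)
print(rules_text)

# Changing the limit in one place changes the rules every test sees.
updated_text = render_rules({**RULE_PARAMS, "premium_usage_limit": 200})
print(updated_text)
```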

Detection of Hidden Issues

AI can identify:

- Missing required fields

- Contradictory states

- Unexpected new fields

- Invalid enum transitions

- Illogical timestamps

This is semantic validation, not just syntactic checking.

Real Comparison: Old vs New Approach

Traditional Assertions

assert response.json()["status"] == "active"

assert response.json()["plan"] == "premium"

assert response.json()["usage"] <= 100

AI Reasoning-Based Validation

rules = """

If user is premium:

- Status must be active or trialing

- Usage limit is 150

- Suspended accounts cannot have an active premium plan

"""

reasoning = llm.validate(rules, response.json())

assert reasoning.is_valid

print(reasoning.explanation)

Why This Matters for QA Teams

Reduced Maintenance

Up to 70% fewer updates when business logic changes.

Increased Coverage

AI validates more scenarios than explicitly written tests.

Smarter Test Suites

Fewer tests, but deeper validation.

Better Bug Detection

AI catches:

- Logical contradictions

- Behavioral anomalies

- Edge cases humans often miss

How to Implement This in Your Framework

Step 1: Centralize Business Rules

Store rules in:

- YAML

- Markdown

- Vector databases
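For the Markdown option, a minimal sketch might keep one rules document with a heading per domain and split it into sections that tests can pick from. The `## ` heading convention, the function name `load_rule_sections`, and the `rules.md` file name mentioned in the comment are assumptions for this example.

```python
def load_rule_sections(markdown_text: str) -> dict:
    """Split a Markdown rules document into {heading: rules text}."""
    sections = {}
    current = None
    for line in markdown_text.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None and line.strip():
            sections[current].append(line.strip())
    return {name: "\n".join(lines) for name, lines in sections.items()}


# In a real suite this text would be read from a file such as a hypothetical rules.md.
RULES_MD = """\
## pricing
- Price must always be >= 0.
- If user is premium, shipping_cost = 0.

## inventory
- Stock cannot be negative.
- Status should match inventory conditions.
"""

rule_sections = load_rule_sections(RULES_MD)
print(rule_sections["inventory"])
```

A test for the inventory endpoint would then pass only `rule_sections["inventory"]` to the validator, which keeps prompts short and focused on the rules that apply.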

Step 2: Create an AI Validation Layer

def ai_assert(rules, response):
    # Delegate to the LLM-backed validator and return its verdict
    return llm.validate(rules, response)
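A slightly fuller variant of this layer could raise an `AssertionError` itself, so the model's explanation shows up directly in the test report. The sketch below assumes the validator returns an object with `is_valid` and `explanation`; `Verdict` and `stub_validate` are hypothetical, and the stub checks a single rule by hand so the example runs without a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    is_valid: bool
    explanation: str


def ai_assert(validate: Callable[[str, dict], Verdict], rules: str,
              response: dict) -> Verdict:
    """Run the validator and fail the test with the model's explanation."""
    verdict = validate(rules, response)
    if not verdict.is_valid:
        raise AssertionError(f"AI validation failed: {verdict.explanation}")
    return verdict


def stub_validate(rules: str, response: dict) -> Verdict:
    """Offline stand-in for an LLM-backed validator; checks one rule by hand."""
    if response.get("stock", 0) < 0:
        return Verdict(False, "Stock cannot be negative.")
    return Verdict(True, "The API response is valid.")


ok = ai_assert(stub_validate, "Stock cannot be negative.", {"stock": 3})
print(ok.explanation)

try:
    ai_assert(stub_validate, "Stock cannot be negative.", {"stock": -2})
except AssertionError as exc:
    failure_message = str(exc)
    print(failure_message)
```

Passing the validator in as an argument keeps the helper easy to test with a stub and lets a suite swap model providers without touching individual tests.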

Step 3: Replace Static Assertions

Move from:

assert response.json()["status"] == "active"

To:

assert ai_assert(rules, response.json()).is_valid

Final Thoughts

Static assertions were built for a simpler era.

Today’s APIs:

- Personalize responses

- Adapt to user behavior

- Evolve continuously

Testing must evolve too.

This is not just automation anymore.

This is reasoning-driven quality engineering.