langchain-core 0.3.86 was officially released on May 7, 2026.

At first glance, the release notes look small:

release(core): 0.3.86

fix(core): backport path-traversal fix to v0.3

(CVE-2026-34070, GHSA-qh6h-p6c9-ff54)

And many developers will probably think:

👉 “Just another patch release.”

👉 “Nothing major.”

👉 “Can upgrade later.”

That mindset is dangerous.

Because this release is not about features.

It’s about security.

And security fixes in AI systems are no longer “optional maintenance.”

They are production-critical engineering work.

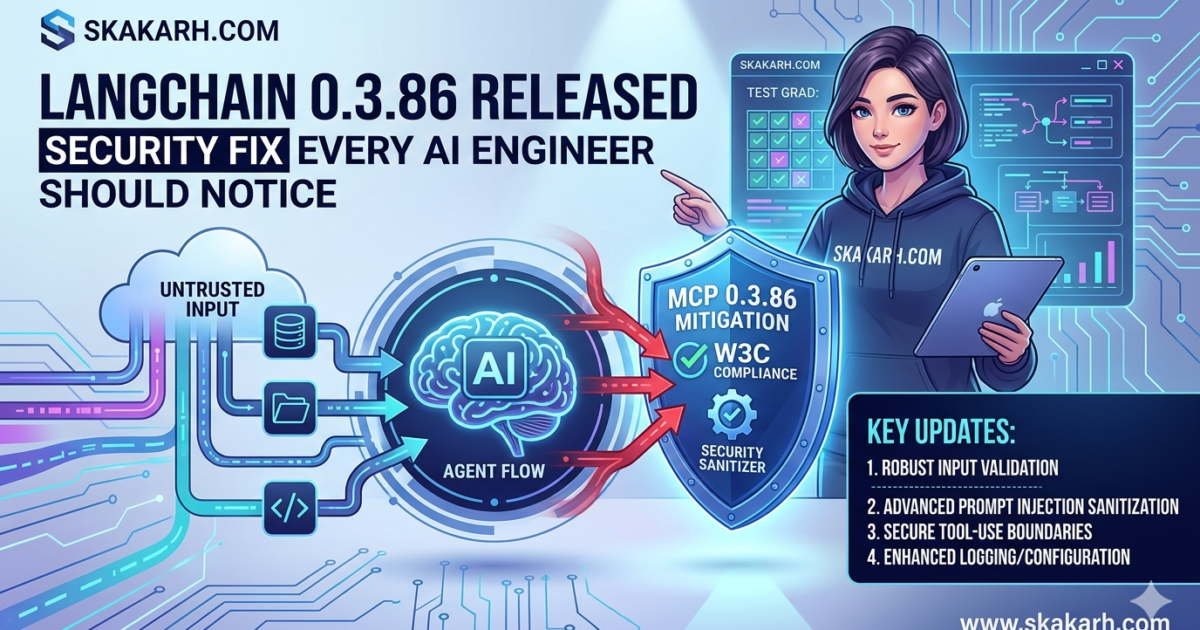

What Actually Changed in LangChain 0.3.86?

The most important update is:

Path Traversal Vulnerability Fix

LangChain patched:

CVE-2026-34070 (GHSA-qh6h-p6c9-ff54)

This is significant because:

👉 Path traversal vulnerabilities can allow unintended file access.

In simple terms:

A malicious input could potentially manipulate file paths and access files outside intended directories.
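To make that concrete, here is a minimal sketch of the general defense against path traversal. It is not the code from the LangChain patch; `resolve_inside` and its parameters are hypothetical names used only for illustration. The idea is to resolve the requested path first and only then check that it still sits under the intended directory.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Resolve user_path under base_dir, rejecting anything that escapes it.

    Hypothetical helper for illustration; not part of LangChain.
    """
    base = Path(base_dir).resolve()
    # Joining and resolving collapses ".." segments and follows symlinks.
    # An absolute user_path replaces base entirely, so it is caught below too.
    candidate = (base / user_path).resolve()
    # is_relative_to (Python 3.9+) is True only when candidate sits under base.
    if not candidate.is_relative_to(base):
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate
```

For a typical workspace directory, an input like `../../sensitive_file` raises `ValueError` here instead of quietly returning a path outside the workspace. Checking the resolved path, rather than scanning the raw string for `..`, is the design choice that matters.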

Why This Matters MORE in AI Systems

Traditional applications already struggle with path traversal issues.

But AI systems introduce something even riskier:

👉 Dynamic inputs

👉 Autonomous agents

👉 Tool execution

👉 File operations driven by prompts

That changes the threat model completely.

The Dangerous Combination

Modern AI agents often have access to:

- File systems

- Temporary workspaces

- Code execution

- Upload/download tools

Now imagine:

agent.run("Read ../../sensitive_file")

If guardrails are weak…

👉 Your AI system can unintentionally expose sensitive data.

AI agents amplify traditional security risks.

That’s why this release matters far beyond “just LangChain.”

What QA Engineers Should Learn From This

Most QA engineers still think:

👉 “Security is the DevSecOps team’s responsibility”

Not anymore.

Modern SDETs working with AI systems MUST understand:

- Input validation

- File handling risks

- Agent boundaries

- Tool permissions

- Prompt injection risks

Because AI automation is no longer:

“Just testing APIs and UI”

It’s becoming:

👉 Autonomous system engineering

The Bigger Industry Problem

Many AI teams currently build systems like this:

agent = Agent(tools=ALL_TOOLS)

Which effectively means:

👉 Unlimited permissions

👉 Broad filesystem access

👉 Weak isolation

That’s incredibly risky.
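A safer pattern is to hand each agent only the tools it needs. The sketch below is framework-agnostic and makes some assumptions: the `ALL_TOOLS` registry, the tool names, and `select_tools` are all hypothetical stand-ins, not a LangChain API.

```python
from typing import Callable, Dict, Iterable

Tool = Callable[[str], str]

# Hypothetical registry standing in for ALL_TOOLS; the names are illustrative.
ALL_TOOLS: Dict[str, Tool] = {
    "search_docs": lambda query: f"results for {query}",
    "read_file": lambda path: f"(contents of {path})",
    "run_shell": lambda command: f"(output of {command})",
}


def select_tools(allowed: Iterable[str]) -> Dict[str, Tool]:
    """Return only the explicitly allowed tools; fail loudly on unknown names."""
    allowed = list(allowed)
    unknown = [name for name in allowed if name not in ALL_TOOLS]
    if unknown:
        raise KeyError(f"unknown tools requested: {unknown}")
    return {name: ALL_TOOLS[name] for name in allowed}


# A documentation-lookup agent gets search only, with no file or shell access.
docs_agent_tools = select_tools(["search_docs"])
```

The allowlist is explicit on purpose: adding a new tool to the registry does not silently grant it to every existing agent.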

Key Improvement #1 — Safer File Handling

This patch improves protection against:

- Unsafe path resolution

- Directory traversal attempts

- Unintended file access behavior

For AI engineering teams, this is critical because:

👉 Agents increasingly interact with local environments automatically.

Every AI tool that touches files becomes a potential attack surface.

Key Improvement #2 — Better Security Stability for AI Workflows

This fix also improves confidence for teams building:

- AI testing agents

- Autonomous coding systems

- Multi-agent workflows

- Local execution pipelines

Because secure infrastructure is foundational for:

✅ Enterprise AI adoption

✅ Production AI systems

✅ Safe automation scaling

⚠️ Any Breaking Changes?

Based on the official release notes:

✅ No major API changes announced

✅ No migration changes mentioned

✅ No major architectural updates

This appears to be:

👉 A focused security patch release

However…

Teams should STILL validate:

- Tool integrations

- File-based workflows

- Sandbox execution

- CI agent behavior

Because security patches can occasionally expose:

- Previously hidden assumptions

- Unsafe implementation shortcuts

- Compatibility edge cases

Should You Upgrade Immediately?

My Recommendation:

✅ YES — Immediately for production AI systems

Especially if your LangChain workflows involve:

- File operations

- Agent tooling

- Code generation

- Local execution

- Multi-agent systems

Because security vulnerabilities should NOT wait for “next sprint.”

Delayed security upgrades are technical debt with a timer attached.

What Mature AI Engineering Teams Do

Professional teams treat security patches differently from feature releases.

They:

✅ Prioritize CVEs immediately

✅ Validate upgrade impact fast

✅ Run focused regression suites

✅ Review tool permissions

✅ Audit agent capabilities

Because modern AI systems are not simple applications anymore.

They are:

👉 Semi-autonomous execution environments

The Hidden Risk Most People Ignore

Many developers are currently giving AI agents:

- File access

- Terminal access

- Repository access

- Execution capabilities

Without:

- Sandboxing

- Permission boundaries

- Behavioral constraints

That combination is dangerous.

AI Security Is Becoming a New SDET Skill

This is the shift happening RIGHT NOW.

Old SDET mindset:

"Does the feature work?"

Modern AI SDET mindset:

"Can the system behave safely under autonomous conditions?"

That’s a completely different engineering discipline.

My Recommendation for AI Teams

Minimum Safety Checklist

Before shipping AI agents:

✅ Restrict filesystem access

✅ Sandbox execution

✅ Validate tool permissions

✅ Monitor agent actions

✅ Log all file operations

✅ Review prompt injection risks

👉 This should become standard engineering practice.
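As one example from the checklist, "log all file operations" can start as small as a decorator around each file tool. This is a rough sketch under stated assumptions: the `audited` decorator, the `agent.file_audit` logger name, and `read_text_file` are hypothetical, and a real tool would also enforce a base directory.

```python
import logging
from functools import wraps
from typing import Callable

# Hypothetical logger name; route it to whatever sink your team monitors.
audit_log = logging.getLogger("agent.file_audit")


def audited(operation: str) -> Callable:
    """Decorator that logs each call to a file tool, including failures."""
    def decorator(func: Callable) -> Callable:
        @wraps(func)
        def wrapper(path: str, *args, **kwargs):
            audit_log.info("%s requested path=%r", operation, path)
            try:
                return func(path, *args, **kwargs)
            except Exception:
                # Record the failure with its traceback, then re-raise it.
                audit_log.exception("%s failed path=%r", operation, path)
                raise
        return wrapper
    return decorator


@audited("read")
def read_text_file(path: str) -> str:
    # Hypothetical agent tool; a real one would also restrict the directory.
    with open(path, encoding="utf-8") as handle:
        return handle.read()
```

Logging the request before the call, not only the result, means a blocked or failed access attempt still leaves a trace for whoever reviews agent behavior later.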

🛠️ How to Upgrade

For Python Tools

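The patched package is langchain-core, so target it directly. To stay on the 0.3 line:

pip install --upgrade "langchain-core>=0.3.86,<0.4"

For Node.js Tools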

npm install langchain@latest
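On the Python side, it is worth confirming which langchain-core version actually ended up in the environment, for example as a CI step. The sketch below assumes the only fact taken from the release notes: 0.3.86 carries the backported fix for the 0.3 line. `parse_release` and `check_core_version` are hypothetical helpers, and other release lines are deliberately reported as "check the advisory" rather than guessed.

```python
import re
from importlib.metadata import PackageNotFoundError, version

PATCHED = (0, 3, 86)  # patched 0.3.x release named in the release notes


def parse_release(text: str) -> tuple:
    """Turn '0.3.86' into (0, 3, 86), keeping only leading digits per part."""
    numbers = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        numbers.append(int(match.group()) if match else 0)
    while len(numbers) < 3:
        numbers.append(0)
    return tuple(numbers)


def check_core_version(installed: str) -> str:
    """Classify an installed langchain-core version string."""
    release = parse_release(installed)
    if release[:2] != (0, 3):
        return "other release line: check the advisory for its patched version"
    if release >= PATCHED:
        return "patched"
    return "vulnerable: upgrade to 0.3.86 or later on the 0.3 line"


if __name__ == "__main__":
    try:
        print(check_core_version(version("langchain-core")))
    except PackageNotFoundError:
        print("langchain-core is not installed in this environment")
```

Checking the installed distribution matters because upgrading the `langchain` package does not by itself guarantee that `langchain-core` moved to a patched version.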

🔗 Full Release Notes

https://github.com/langchain-ai/langchain/releases/tag/langchain-core%3D%3D0.3.86

💬 Let’s Talk

👉 Are your AI agents sandboxed properly?

👉 Would your current setup survive a prompt injection attack?

Drop your thoughts below 👇

🔥 Final Line

AI systems don’t fail only because of bad code.

Sometimes they fail because we gave them too much trust.